Developer Tools

Free

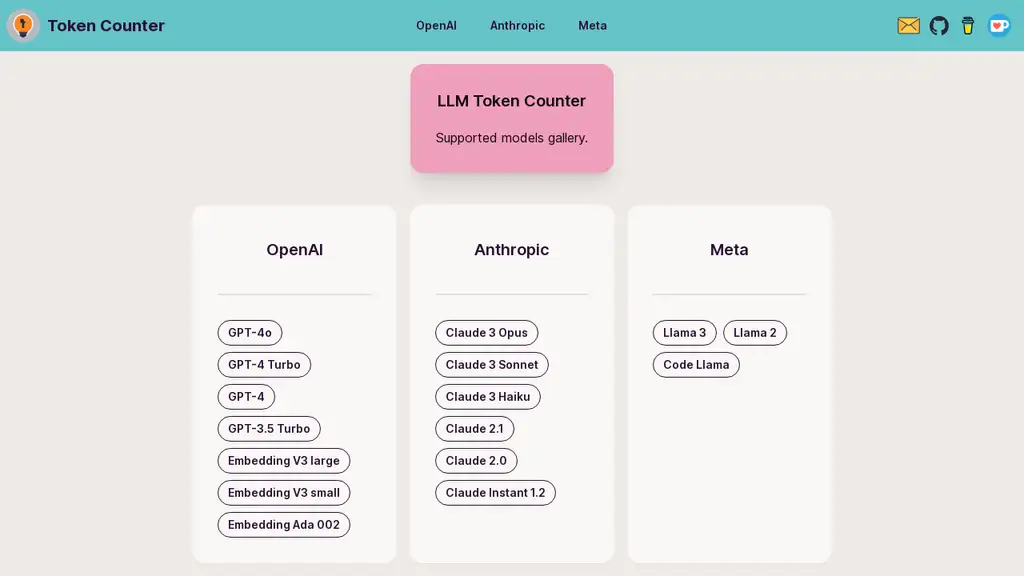

LLM Token Counter helps users manage token limits for widely used large language models (LLMs), including GPT-3.5, GPT-4, Claude-3, Llama-3, and many others. It checks that a prompt stays within the token limit of the chosen model, which helps prevent unexpected or undesirable outputs and gives more consistent results. Support for more models is being added over time, and users are encouraged to send feedback or feature suggestions by email.

LLM Token Counter runs on Transformers.js, a JavaScript implementation of the Hugging Face Transformers library. Tokenizers are loaded directly in the user's browser, so token counts are calculated client-side. The calculations are reported to be fast, which the tool's description credits to the efficient Rust implementation behind the Hugging Face tokenizers. Because everything runs in the browser, prompts are never transmitted to a server or any other external party, so sensitive text stays on the user's machine.
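The client-side check described above can be sketched roughly as follows. This is a minimal illustration, not the tool's actual code: the `TokenizerLike` interface, the `checkPrompt` helper, and the whitespace stand-in tokenizer are all hypothetical names introduced here so the example runs without downloading a model.

```typescript
// Minimal shape of a tokenizer: only the one method this sketch needs.
// (Assumption: a real tokenizer exposes encode(text) returning token ids.)
interface TokenizerLike {
  encode(text: string): number[];
}

// Result of comparing a prompt's token count against a model's limit.
interface TokenCheck {
  tokens: number;
  limit: number;
  remaining: number;
  withinLimit: boolean;
}

// Count the tokens in a prompt and compare the count to the limit.
// Nothing here touches the network: the prompt never leaves this function.
function checkPrompt(
  tokenizer: TokenizerLike,
  prompt: string,
  limit: number
): TokenCheck {
  const tokens = tokenizer.encode(prompt).length;
  return {
    tokens,
    limit,
    remaining: limit - tokens,
    withinLimit: tokens <= limit,
  };
}

// Stand-in tokenizer for illustration only: one "token" per
// whitespace-separated word. Real model tokenizers split text differently.
const whitespaceTokenizer: TokenizerLike = {
  encode: (text) =>
    text
      .split(/\s+/)
      .filter((word) => word.length > 0)
      .map((_, index) => index),
};

const result = checkPrompt(
  whitespaceTokenizer,
  "Count the tokens in this prompt",
  8
);
console.log(result);
```

In a browser, the stand-in could be swapped for a tokenizer loaded through Transformers.js, for example with `AutoTokenizer.from_pretrained(...)`, whose `encode` method returns an array of token ids. Which tokenizer repository matches a given model is an assumption the reader would need to verify.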

Supports multiple popular LLMs

Client-side token count calculations

Utilizes Transformers.js library

Ensures data privacy and security

Fast and efficient Rust implementation

Users can keep prompts within a model's token limit, avoiding unexpected outputs.

Ensures data privacy by performing calculations client-side.

Optimizes interactions with LLMs for more reliable results.

Supports a variety of popular language models.

Encourages user feedback for continual tool enhancement.

hantian.pang@gmail.com

6/2/2024
